Deep Learning: Foundations, Tools, Careers & Interview Guide (2026)

Deep learning has transformed how machines understand language, images, sound, and decision-making. From NLP systems and computer vision to reinforcement learning agents, deep neural networks now power modern AI applications.

This article covers:

- What deep learning is (vs. machine learning and classical NLP)

- Key foundations and concepts

- Popular tools like PyTorch and Hugging Face

- Deep reinforcement learning (TAMU context)

- Workstations for deep learning

- Unsupervised learning and thermodynamics

- Performance engineering in deep learning

- Top deep learning interview questions

1️⃣ What Is Deep Learning?

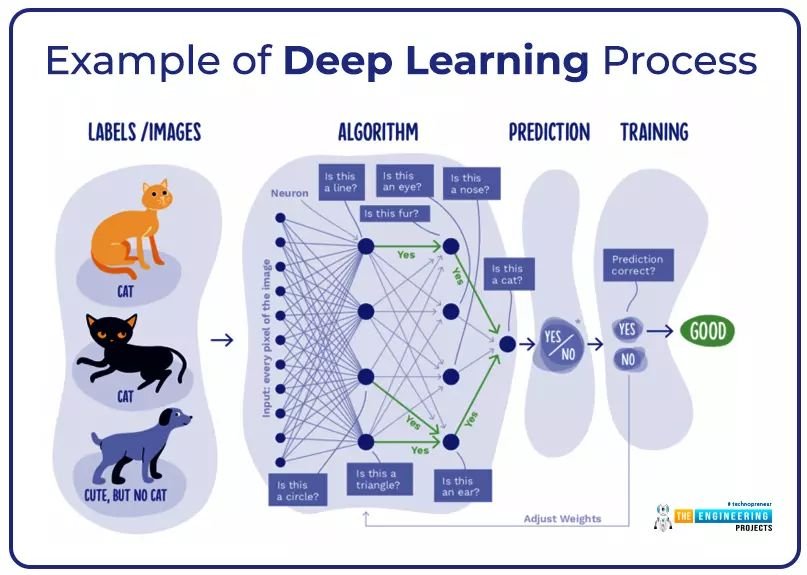

Deep learning is a subset of machine learning that uses multi-layer neural networks to automatically learn features and perform tasks like classification, prediction, and generation.

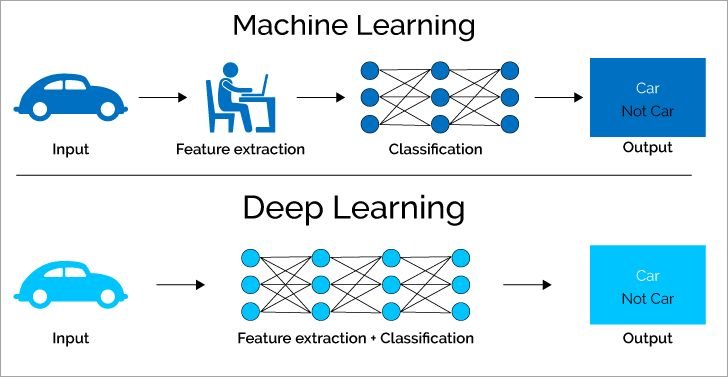

🔹 Machine Learning vs Deep Learning

Traditional Machine Learning:

- Manual feature extraction

- Separate feature engineering + classification

- Works well on structured data

Deep Learning:

- Automatically learns features

- End-to-end training

- Excels on unstructured data (images, text, audio), especially with large datasets

Example:

- ML: Extract image edges manually → classify as “Car / Not Car”

- DL: Neural network learns edges, shapes, and patterns automatically
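To make the ML side of this example concrete, here is a minimal sketch of a hand-crafted feature followed by a separate, fixed decision rule. The function names, the toy images, and the threshold of 1.0 are illustrative assumptions; a deep network would instead learn such filters from labeled data.

```python
def horizontal_edge_strength(image):
    """Hand-crafted feature: mean absolute difference between neighboring pixels in each row."""
    diffs = [abs(row[i + 1] - row[i]) for row in image for i in range(len(row) - 1)]
    return sum(diffs) / len(diffs)


def has_edge(image, threshold=1.0):
    """Separate 'classifier' stage: a fixed rule applied to the manual feature."""
    return horizontal_edge_strength(image) > threshold


flat_image = [[5, 5, 5, 5] for _ in range(3)]  # uniform patch, no edges
edge_image = [[0, 0, 9, 9] for _ in range(3)]  # sharp vertical boundary

print(has_edge(flat_image), has_edge(edge_image))
```

Every design decision here (which differences to take, where to put the threshold) is made by a person, which is the step end-to-end training removes.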

2️⃣ Deep Learning in NLP

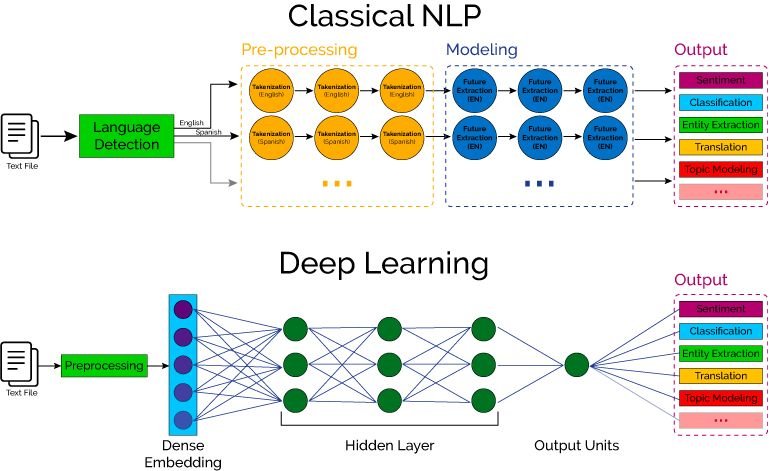

🔹 Classical NLP Pipeline

- Language detection

- Tokenization

- Feature engineering (TF-IDF, n-grams)

- Modeling

- Output (sentiment, translation, topic modeling)
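The feature-engineering step of this pipeline can be sketched with a small TF-IDF implementation. This is a simplified variant (whitespace tokenizer, unsmoothed `log(N / df)` weighting) chosen for readability; production libraries typically use smoothed or normalized formulas, and the sample sentences are made up.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase and split on whitespace (a deliberately naive tokenizer)."""
    return text.lower().split()


def tf_idf(docs):
    """Return one {term: weight} dict per document."""
    tokenized = [tokenize(doc) for doc in docs]
    n_docs = len(tokenized)
    # Document frequency: number of documents that contain each term.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        vectors.append({
            # Term frequency times inverse document frequency.
            term: (count / len(tokens)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return vectors


docs = ["the movie was great", "the movie was terrible", "the acting was great"]
vectors = tf_idf(docs)
print(vectors[1])
```

Words that appear in every document ("the", "was") receive zero weight, while a rare word such as "terrible" gets the highest weight in its document.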

🔹 Deep Learning NLP

- Dense embeddings

- Hidden layers (LSTM, CNN, Transformer)

- Output layer (classification, translation, entity extraction)

Modern NLP relies heavily on transformer-based models such as:

- BERT

- GPT

- RoBERTa

These are often implemented using:

- PyTorch

- Hugging Face Transformers

3️⃣ Deep Learning with PyTorch & Hugging Face

🔹 PyTorch

Originally developed by Meta, PyTorch offers:

- Dynamic (define-by-run) computation graphs

- A Python-friendly API

- Wide adoption in research

🔹 Hugging Face

Provides:

- Pretrained transformers

- Datasets

- Tokenizers

- Model hub

Typical workflow:

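```python
from transformers import pipeline

# Loads a default pretrained sentiment model (downloaded on first use)
classifier = pipeline("sentiment-analysis")

# Returns a list of dicts, each with a "label" and a "score"
print(classifier("Deep learning is amazing!"))
```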

Used in:

- Chatbots

- Text summarization

- Translation

- Question answering

4️⃣ Deep Reinforcement Learning (TAMU Focus)

At Texas A&M University (TAMU), research in deep reinforcement learning focuses on:

- Robotics

- Autonomous systems

- Multi-agent learning

- Control systems

Deep RL combines:

- Neural networks

- Q-learning / Policy gradients

- Environment interaction
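The Q-learning ingredient can be illustrated with a tabular sketch. The five-state corridor environment, the hyperparameters, and all names below are hypothetical; deep RL replaces the table `q` with a neural network that estimates the same values.

```python
import random

N_STATES = 5        # states 0..4; reaching state 4 ends the episode
ACTIONS = (-1, +1)  # move left or right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.1


def step(state, action):
    """Hypothetical environment: reward 1.0 only on reaching the last state."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=200, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action_index]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: explore occasionally (or on ties), otherwise act greedily.
            if rng.random() < EPSILON or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, ACTIONS[a])
            # Q-learning target: reward plus discounted best value of the next state.
            target = reward + (0.0 if done else GAMMA * max(q[next_state]))
            q[state][a] += ALPHA * (target - q[state][a])
            state = next_state
    return q


q_table = train()
greedy_policy = [ACTIONS[0 if row[0] > row[1] else 1] for row in q_table[:-1]]
print(greedy_policy)
```

The loop shows all three components in miniature: a value estimator, the Q-learning update, and repeated interaction with an environment.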

Applications:

- Self-driving cars

- Game AI

- Industrial automation

5️⃣ Deep Learning: Foundations and Concepts

If you’re studying Deep Learning: Foundations and Concepts, focus on:

Core topics:

- Linear algebra (vectors, matrices, eigenvalues)

- Probability & statistics

- Gradient descent

- Backpropagation

- Activation functions (ReLU, Sigmoid, Tanh)

- Loss functions

- Regularization (Dropout, L2)

- Optimization (Adam, SGD)
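Gradient descent and backpropagation are easiest to see on a single linear neuron. The sketch below fits toy data generated from y = 2x + 1 (an assumption for illustration) with a squared-error loss, computing gradients by hand with the chain rule; frameworks automate exactly this step for deeper networks.

```python
# Toy data generated from y = 2x + 1 (assumed for illustration).
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

w, b = 0.0, 0.0
learning_rate = 0.05

for _ in range(2000):
    grad_w = grad_b = 0.0
    for x, y in zip(xs, ys):
        pred = w * x + b      # forward pass
        error = pred - y      # dLoss/dpred for loss = 0.5 * error**2
        grad_w += error * x   # chain rule: dLoss/dw = dLoss/dpred * dpred/dw
        grad_b += error       # dpred/db = 1
    # Average the gradients over the batch and step against them.
    w -= learning_rate * grad_w / len(xs)
    b -= learning_rate * grad_b / len(xs)

print(round(w, 3), round(b, 3))
```

Optimizers such as Adam change only the last two lines (how the gradient is turned into a parameter update); the forward and backward passes stay the same.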

Advanced:

- CNNs

- RNNs

- Transformers

- Attention mechanisms

- Diffusion models
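Attention, the core operation inside transformers, fits in a few lines. This is a minimal single-head sketch of scaled dot-product attention on plain Python lists, without masking, batching, or learned projections; the example vectors are arbitrary.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention for lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Each output is the attention-weighted average of the value vectors.
        outputs.append([
            sum(wt * v[j] for wt, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0]]
result = attention([[5.0, 0.0]], keys, values)
print(result)
```

Because the query points in the same direction as the first key, most of the weight goes to the first value vector.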

6️⃣ Deep Unsupervised Learning Using Nonequilibrium Thermodynamics

This refers to the 2015 paper of that title by Jascha Sohl-Dickstein, Eric Weiss, Niru Maheswaranathan, and Surya Ganguli.

It introduced the foundation of diffusion probabilistic models, which later influenced:

- DALL·E 2

- Stable Diffusion

- Modern generative AI

Key idea:

- Gradually add noise to data

- Learn to reverse the noise process

- Generate realistic samples

This concept reshaped generative AI.
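The "gradually add noise" half of the idea can be sketched as follows. The closed-form sampling step and the linear variance schedule use the Gaussian parameterization popularized by later diffusion work, which is an assumption about notation here rather than the paper's own code; the reverse (denoising) model is a trained network and is not shown.

```python
import math
import random


def linear_betas(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances; the linear schedule is an illustrative choice."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def alpha_bars(betas):
    """Cumulative product of (1 - beta_t): the fraction of signal variance kept."""
    out, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        out.append(running)
    return out


def noise_sample(x0, t, abar, rng):
    """Sample x_t directly from x_0: sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps."""
    signal, noise = math.sqrt(abar[t]), math.sqrt(1.0 - abar[t])
    return [signal * x + noise * rng.gauss(0.0, 1.0) for x in x0]


rng = random.Random(0)
abar = alpha_bars(linear_betas(1000))
x0 = [1.0, -1.0, 0.5]
slightly_noisy = noise_sample(x0, 0, abar, rng)       # still close to x0
almost_pure_noise = noise_sample(x0, 999, abar, rng)  # signal nearly gone
print(abar[0], abar[-1])
```

Training then amounts to teaching a network to undo one noising step at a time, so that sampling can start from pure noise and walk back to data.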

7️⃣ Deep Learning Workstation: What You Need

For serious deep learning work:

🔹 Minimum Setup

- GPU: RTX 3060+

- 32GB RAM

- 1TB NVMe SSD

🔹 Advanced Setup

- RTX 4090 or A6000

- 64–128GB RAM

- Multi-GPU setup

- Linux OS (Ubuntu recommended)

Cloud alternatives:

- AWS

- GCP

- Paperspace

- Lambda Labs

8️⃣ Performance Engineer – Deep Learning Role

A Deep Learning Performance Engineer focuses on:

- GPU optimization

- Memory profiling

- Distributed training

- Mixed precision (FP16, BF16)

- CUDA kernel tuning

- Model quantization

- Inference optimization (TensorRT, ONNX Runtime)
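As one example from this list, model quantization can be sketched as symmetric int8 quantization with a single shared scale. This is a simplified illustration with made-up weights; real toolchains usually add per-channel scales, zero points, and calibration data.

```python
def quantize_int8(weights):
    """Map floats to integer codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.02, -0.51, 0.37, 1.27, -1.0]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, max_error)
```

Each weight now needs 8 bits instead of 32, at the cost of a rounding error of at most half the scale per weight.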

Skills required:

- CUDA

- PyTorch internals

- Profiling tools (Nsight)

- Parallel computing

Typical salary range (US): roughly $130k–$200k+, varying with location, seniority, and employer

9️⃣ Top Deep Learning Interview Questions

🔹 Basic

- What is backpropagation?

- Why use ReLU?

- What is the vanishing gradient problem?

- What is the difference between a CNN and an RNN?

🔹 Intermediate

- Explain batch normalization.

- What is an attention mechanism?

- How does the Adam optimizer work?

- What is overfitting, and how do you prevent it?

🔹 Advanced

- Explain the transformer architecture.

- What is a diffusion model?

- How would you scale training to multiple GPUs?

- What is the difference between model parallelism and data parallelism?
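For the data parallelism question, a short simulation can anchor the answer. In this sketch (hypothetical names, a one-parameter model, workers simulated in a single process), each worker computes the gradient on its own equal-sized shard, and averaging the results reproduces the full-batch gradient.

```python
def mean_gradient(w, batch):
    """Gradient of the mean of 0.5 * (w * x - y)**2 with respect to w."""
    return sum((w * x - y) * x for x, y in batch) / len(batch)


def data_parallel_gradient(w, batch, num_workers):
    """Split the batch into equal shards, compute local gradients, then average them."""
    shard_size = len(batch) // num_workers
    shards = [batch[i * shard_size:(i + 1) * shard_size] for i in range(num_workers)]
    local_grads = [mean_gradient(w, shard) for shard in shards]  # one per simulated GPU
    return sum(local_grads) / num_workers  # stands in for an all-reduce average


batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
single = mean_gradient(0.5, batch)
parallel = data_parallel_gradient(0.5, batch, num_workers=2)
print(single, parallel)
```

Model parallelism, by contrast, splits the model itself (layers or tensor slices) across devices, so each device holds only part of the parameters.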

🔟 Career Paths in Deep Learning

- AI Researcher

- ML Engineer

- NLP Engineer

- Computer Vision Engineer

- Deep RL Researcher

- Performance Engineer

- MLOps Engineer